Brief

Augmented Reality (AR) is an exciting space but, as with any other tool, the industry at large has yet to overcome many interesting challenges before it can create something of value for the user.

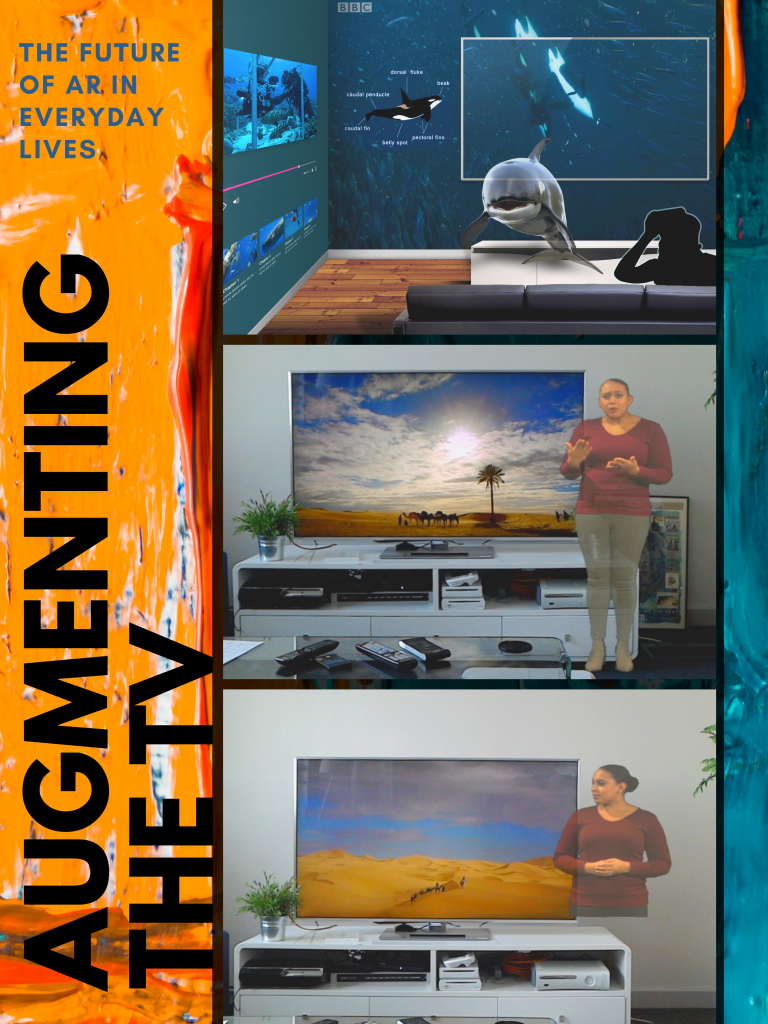

In this project, I was interested in exploring the potential of using an optical head-mounted display (HMD), the HoloLens, to extend the screen area of connected TVs beyond the rigid frame of the display. The additional space could then be used to present augments relevant to a TV programme, thereby creating an ARTV experience.

There were two problems to investigate:

a) the design of the augments that best suited the format and function of the experience,

b) the evaluation of the whole ARTV experience to explore users' preferences and the usability of the concept.

Design Research Questions

- How can we augment programmes on a connected TV through the use of augmented reality technologies?

- How do we go about designing a usable ARTV experience?

- How do users respond to an ARTV experience?

Scope

TV programmes come in different flavours and with a variety of functions. Within the scope of this project, I wanted to investigate the potential of using AR to personalise TV access services – more specifically sign language interpretation.

Context

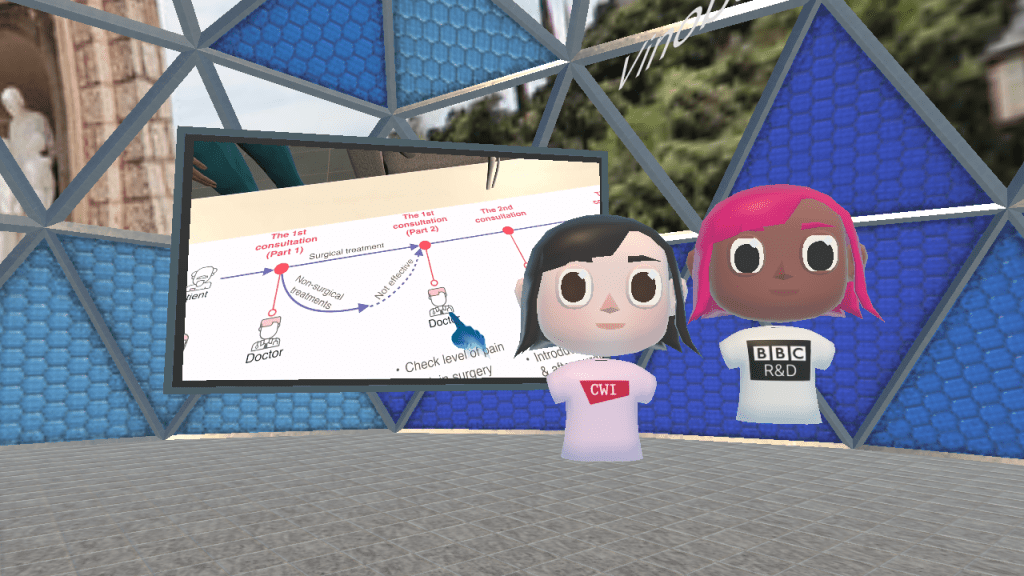

In collaboration with industry partners, I (at BBC R&D) and IRT have been exploring how to customise and personalise the experience of viewing programme content on connected TVs by delivering additional (companion) content to personal devices via IP, in tandem with the programme.

In ARTV, the screen extends to the immersive 3D space around the user. Traditional access services undoubtedly enhance our TV programme offerings for many users, but they are an intrusive option which can’t be personalised or controlled beyond turning them on or off.

- How might we allow users watching TV in a group to personalise their viewing experience without imposing their preferences on the whole group?

Moving the signer off the TV allows users to view the content in the way it was intended to be watched and allows service providers to personalise the signer to the users’ preference. Additionally, accessibility is one use case for which it might be perfectly acceptable to ask users to watch TV with an optical HMD strapped to their heads without having to roll out an elaborate set of justifications.

Design Approach

The design of traditional in-vision signing provided guidance on the creation, capture and presentation of the AR sign language interpreters. Several transferable pieces of knowledge were identified from the ‘Code on Television Access Services’ published by Ofcom, the UK communications regulator, and from prior work in organisations such as the BSL Broadcasting Trust and the Deaf Studies Trust.

Prior work revealed the importance of the interpreters’ relationship with the content. In addition to ‘ease of shifting attention’, a relationship can be demonstrated via the direction of the interpreters’ gaze and their synchronised interaction with the TV content. This was tackled during the capture process. After a few variations of chroma-keyed sign language interpretation videos were created, a colleague with BSL skills was shown different combinations of the interpreter cutout videos through the HoloLens, synchronised to the corresponding programme on a connected TV. The aim was to create a signed AR experience that maintained a ‘connection’ between the AR interpreter and the content and, in turn, the ‘continuity’ between the user, the AR interpreter and the content. Given the limited field of view of the HoloLens, it was important to ensure the whole ARTV experience was viewable through the HoloLens without the user having to move their head.

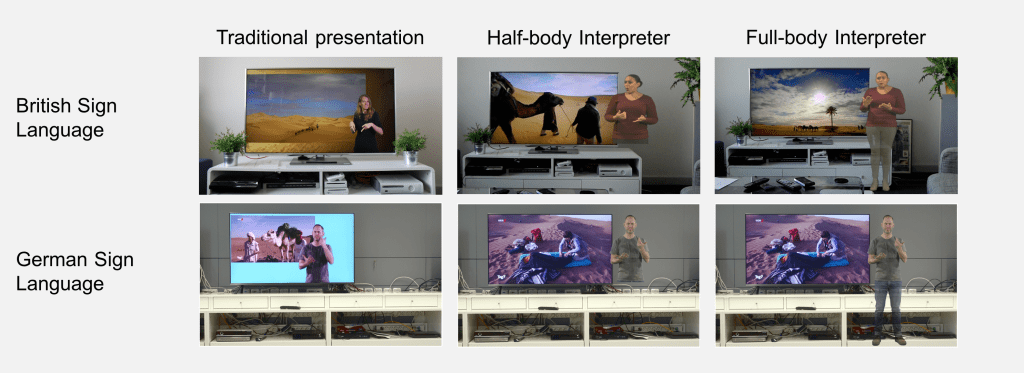

As well as checking the quality of the ARTV experience, we settled on two designs that seemed to work for an AR sign language interpreted TV programme experience: a ‘half-body’ and a ‘full-body’ AR interpreter. These two designs also seemed diverse enough not to be seen as slight variations of the same thing. Full details of the design process and the key principles we used to create the final designs are available in my work-in-progress paper. The guidelines used in this project for designing AR sign language interpreters for the TV can be summarised as follows:

- The heads of the AR interpreters have to be aligned with the top of the TV,

- The bottom edge of the ‘half-body’ AR interpreter has to be aligned with the bottom of the TV, almost sitting on the TV stand,

- The feet of the ‘full-body’ AR interpreter have to be grounded in the physical room,

- The interpreter's gaze has to be directed horizontally towards the TV during the resting phase and directly towards the viewer during the interpreting phase,

- The interpreter needs to be positioned so that it slightly overlaps the TV, and

- All designs are placed on the right-hand side of the TV frame.
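The geometric guidelines above can be sketched in a few lines of Python. This is only an illustration, not the code used in the project: the `Rect` type, the `place_interpreter` function, the `aspect` and `overlap` parameters and the example TV dimensions are all hypothetical, and everything is flattened to a 2D plane (metres, x to the right, y up, floor at y = 0). Gaze direction is not modelled here, as it was handled during capture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle in the TV plane: metres, x right, y up, floor at y = 0."""
    left: float
    bottom: float
    width: float
    height: float

    @property
    def right(self) -> float:
        return self.left + self.width

    @property
    def top(self) -> float:
        return self.bottom + self.height


def place_interpreter(tv: Rect, design: str, aspect: float, overlap: float = 0.1) -> Rect:
    """Return the rectangle occupied by the AR interpreter cutout.

    aspect  -- width / height of the cutout video (hypothetical parameter)
    overlap -- fraction of the cutout's width that overlaps the TV (hypothetical parameter)
    """
    if design == "half-body":
        bottom = tv.bottom   # bottom edge level with the bottom of the TV
    elif design == "full-body":
        bottom = 0.0         # feet grounded on the floor of the room
    else:
        raise ValueError(f"unknown design: {design!r}")

    height = tv.top - bottom            # head aligned with the top of the TV
    width = height * aspect
    left = tv.right - overlap * width   # right-hand side, slightly overlapping the TV
    return Rect(left, bottom, width, height)


# Example with made-up dimensions: a 1.2 m wide TV whose bottom edge is 0.5 m above the floor.
tv = Rect(left=0.0, bottom=0.5, width=1.2, height=0.7)
half = place_interpreter(tv, "half-body", aspect=0.5)
full = place_interpreter(tv, "full-body", aspect=0.5)
```

In this sketch both designs share the same top edge and the same horizontal anchor, so the only difference between them is where the bottom of the cutout sits, which in turn determines its size.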

Evaluative Approach

I designed and organised an evaluative within-group study to gather user responses to the two designs for AR interpreters (presented through a HoloLens) in comparison to the traditional in-vision (picture-in-picture, control condition) method of watching signed content. The study was conducted in the user laboratory at BBC R&D, and mirrored at IRT, with three goals:

- Gain an early understanding of how members of the BSL and DGS communities respond to accessing sign language interpretations through the HoloLens in AR,

- Identify any differences due to the way sign language interpretations are consumed across the two cultures, and

- Confirm the reproducibility of our design approach and the methodology of our ongoing studies.

We invited hard of hearing users (in the UK and Germany) to watch natural history documentaries in our user labs. With the help of a sign language interpreter, I was able to administer a feedback survey after each of the three viewing conditions to get an idea of usability from the users. At the end of the session, I conducted a semi-structured interview to get their feedback on how the conditions compared with each other.

PS:

In summary, the participants revealed that the demands made by deaf viewers on the sign-language service are very individual. Participants in our study highlighted the importance of control when it comes to personalising the experience to their needs.

Personalisation is key! The details from the study can be read in my CHI paper while the design process can be read in my TVX 2018 work in progress paper. I also summarised the project in a blog post for CHI 2019. I have other collaborative papers on AR and TV which can be read in a TVX 2019 paper and a TVX/IMX 2020 paper (where it got an honorable mention for best paper).
